AI Strategy for Business: The Complete UK Guide for C-Suite Leaders in 2026

In 2026, the divide between AI leaders and laggards no longer reflects technological capability—it reflects strategic clarity. While 35% of UK SMEs now actively use AI (up from 25% in 2024), many organisations are discovering that technology alone doesn't deliver transformation. What separates companies seeing measurable return on AI investment from those struggling with deployment chaos is a cohesive, board-aligned strategy that embeds artificial intelligence into long-term business growth.

This comprehensive guide walks C-suite executives, board members, and transformation leaders through every critical dimension of AI strategy: from defining clear business outcomes and governance frameworks to assessing organisational readiness and managing implementation risk. Whether you're building your first AI strategy or refining existing initiatives, this playbook provides the frameworks, benchmarks, and decision-making tools to position your organisation as an AI leader.

| Figure | Metric | Detail |
|---|---|---|
| 87% | AI Competitive Pressure | UK leaders cite AI as top strategic priority |
| 3.5× | ROI Multiplier | With formal strategy vs. ad-hoc adoption |
| £2.5B | UK Government Investment | AI and quantum technologies, March 2026 |
| 35% | Active AI Adoption | UK SMEs currently using AI tools |

Sources: UK Government AI Investment Announcement 2026, Protiviti AI Governance Research 2026

The Bottom Line

Organisations with formal AI strategies embedded into board governance report 3.5× higher ROI than those treating AI as tactical cost reduction. Strategy clarity, not technology choice, determines success in 2026.

1. Why AI Strategy Matters Now More Than Ever

The conversation around AI has fundamentally shifted in early 2026. Twelve months ago, the question was "should we invest in AI?" Today, it's "how do we avoid falling behind?" UK business leaders are experiencing palpable competitive pressure—87% cite artificial intelligence as a top strategic priority for their organisation. But confidence is waning. UK business leaders' confidence in AI's transformative potential fell from eight out of ten in mid-2025 to just two-thirds by February 2026.

This gap between competitive urgency and confidence decline isn't a contradiction—it's reality. Organisations are discovering that adopting AI tools without strategy leads to fragmented implementations, budget waste, and minimal ROI. A CEO might approve ChatGPT access for teams to "improve productivity," only to find that without governance, skill development, and integration into defined workflows, the tools become expensive toys rather than strategic assets.

The March 2026 UK government announcement of £2.5 billion in new AI and quantum funding, including a £500 million Sovereign AI Fund, signals the government's commitment to positioning the UK as an AI leader. But funding alone won't move the needle. Your organisation needs a strategy that channels investment into outcomes that matter to the business.

2. The Three Pillars of Enterprise AI Strategy

Sustainable AI strategy rests on three interconnected pillars: business alignment, organisational readiness, and governance. Neglect one, and your entire programme will suffer.

Pillar 1: Business Alignment

AI must serve clearly defined business objectives, not technology roadmaps. Leading organisations map AI use cases to one of three distinct value drivers:

Revenue Growth

AI enabling new products, personalisation, market expansion, or customer acquisition at scale. Example: generative AI for product recommendations, pricing optimisation, or lead qualification.

Operational Efficiency

Automation reducing manual work, accelerating processes, and lowering unit costs. Example: document processing, customer support automation, or supply chain optimisation.

Risk Mitigation

AI strengthening compliance, fraud detection, cybersecurity, and operational resilience. Example: anomaly detection, regulatory reporting, or predictive maintenance.

Organisations reporting high AI ROI engage boards on strategy at every meeting, with clear links between AI initiatives and financial outcomes. Only 13% of low-ROI organisations discuss AI this consistently. The pattern is unmissable: strategy clarity drives results.

Pillar 2: Organisational Readiness

Technology can be procured in weeks; culture and capability take years to build. Your organisation's readiness for AI spans four dimensions: data maturity, talent and skills, change management capability, and executive alignment.

Data maturity underpins everything. Can you access clean, labelled data at scale? Do you have data governance frameworks in place? Organisations lacking robust data foundations waste millions on AI tools that have nothing meaningful to learn from. Many UK enterprises discover they're 18 months behind on data preparation when they try to deploy ML models.

Talent and skills remain the most acute constraint. UK unemployment in AI roles sits below 1%, and competition for skilled practitioners is fierce. Your strategy must address this: do you hire, partner with consultancies, retrain existing staff, or build nearshore/offshore centres? Each path has different cost and timeline implications.

Pillar 3: Governance

AI governance has evolved from "nice to have" to boardroom imperative. Regulators are tightening frameworks. The EU AI Act entered into force in August 2024 and its obligations are phasing in, the UK is developing its own governance roadmap, and financial regulators globally are requiring AI risk disclosures. Organisations without governance frameworks face regulatory risk, reputational exposure, and operational chaos.

Your governance strategy must address model transparency, bias mitigation, explainability, audit trails, and accountability. For high-stakes applications (credit decisions, hiring, healthcare), governance isn't optional—it's the cost of doing business responsibly.

Navigating these three pillars requires specialist AI strategy consulting tailored to your industry and business model.

3. Defining Business Outcomes and Prioritising Use Cases

The most common AI strategy failure is starting with technology choice instead of outcome definition. Teams debate whether to build or buy, whether to use cloud or on-premise infrastructure, before anyone has articulated what problem they're solving.

Reverse the sequence. Begin with a clear answer to this question: What measurable business outcome will this AI initiative deliver? Reduced customer acquisition cost. Faster claims processing. Improved product quality. Better inventory forecasting. The outcome must be:

Financially Quantifiable

Tied to revenue, cost, or efficiency KPIs that matter to the P&L. "Improve customer satisfaction" isn't quantifiable; "reduce average support ticket resolution time by 40%, saving £2M annually" is.

Business-Owned, Not IT-Owned

The finance director, sales leader, or operations VP who owns the business area should sponsor the use case. If only IT cares, the outcome probably isn't strategically important.

Achievable in 12–18 Months

AI initiatives that stretch beyond two years lose momentum and executive attention. Scope use cases so you can show value and iterate within 12 to 18 months.

Outcome First, Technology Second

Define the measurable business outcome before selecting tools. A poorly scoped ChatGPT pilot may deliver no ROI, while a well-targeted document automation programme could save hundreds of hours annually. The technology is secondary to clarity of purpose.
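The three outcome criteria above (financially quantifiable, business-owned, achievable in 12–18 months) can be applied as a simple screening check before a use case reaches the roadmap. The following is a minimal sketch, not a prescribed method: the `UseCase` record, its field names, and the example values are all hypothetical and would need adapting to your own intake process.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class UseCase:
    """Hypothetical intake record for a proposed AI use case."""
    name: str
    annual_value_gbp: Optional[float]  # quantified financial outcome, None if not estimated
    business_sponsor: Optional[str]    # named business owner (not IT), None if absent
    months_to_value: int               # estimated time to first measurable value


def screen(use_case: UseCase, max_months: int = 18) -> List[str]:
    """Return the criteria the use case fails; an empty list means it passes."""
    failures = []
    if use_case.annual_value_gbp is None or use_case.annual_value_gbp <= 0:
        failures.append("not financially quantified")
    if not use_case.business_sponsor:
        failures.append("no business sponsor")
    if use_case.months_to_value > max_months:
        failures.append(f"exceeds {max_months}-month horizon")
    return failures


# Illustrative examples only.
support = UseCase("Support ticket triage", 2_000_000, "Operations VP", 12)
chatbot = UseCase("General chatbot pilot", None, None, 30)

print(screen(support))  # passes all three criteria
print(screen(chatbot))  # fails all three criteria
```

Returning the list of failed criteria, rather than a single pass/fail flag, gives the sponsor something concrete to fix before resubmitting.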

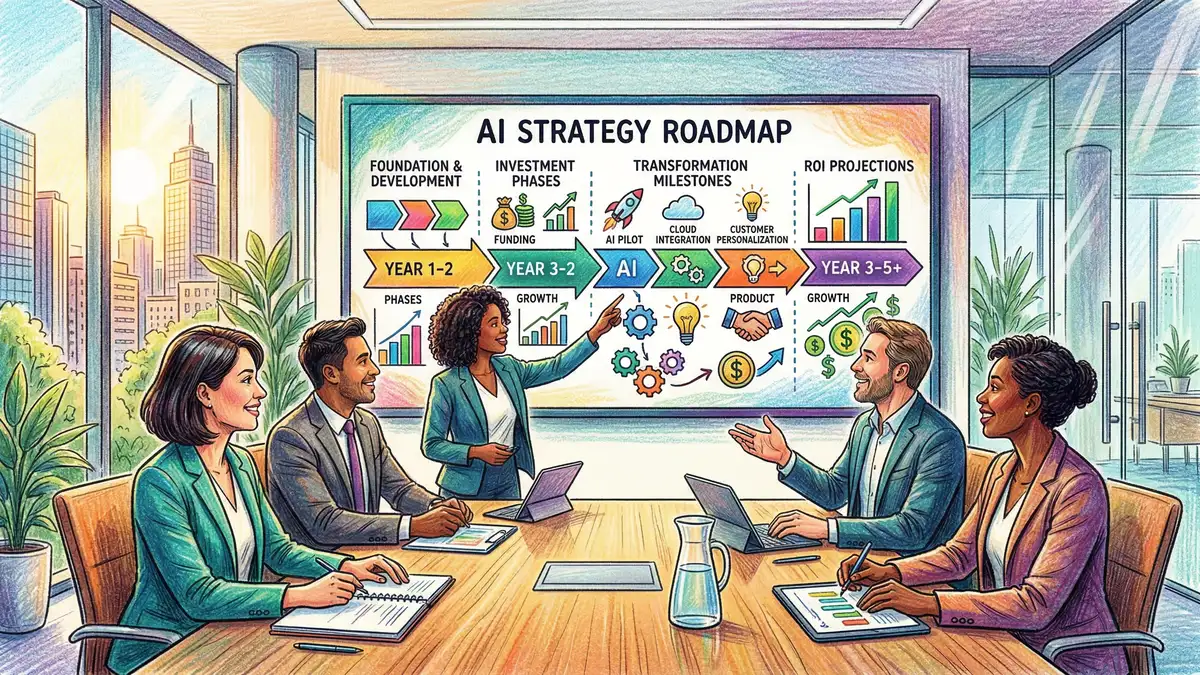

4. Building Your 18-Month AI Roadmap

A credible AI roadmap balances ambition with realism. It sequences initiatives by strategic importance, dependency, and execution risk. Here's the structure leading organisations are using:

| Phase | Timeline | Focus | Typical Outcomes |
|---|---|---|---|
| Foundation | Months 1–4 | Data readiness, governance setup, pilot selection | Board-approved strategy, data audit complete, governance framework live |
| Pilot | Months 4–9 | Proof of concept with 1–2 high-priority use cases | Validated business case, model performance metrics, team skills mapped |
| Scale | Months 9–18 | Production deployment, process redesign, capability scaling | Live systems delivering measurable ROI, team upskilled, next wave identified |

Sources: Gartner AI Maturity Framework 2026, McKinsey AI Adoption Survey

Notice the structure: foundation work in months 1–4 is non-negotiable. Many organisations skip this and pay heavily. Without clear governance, data validation, and executive alignment, your pilots will stumble. The foundation phase answers five critical questions:

- Are our data foundations sound? Can we reliably access the data needed to train and deploy models?

- Do we have executive alignment? Are the CEO and board committed, and have they allocated budget?

- Is our governance framework in place? Do we have policies on model explainability, bias testing, and audit?

- Who will own this? Have we appointed a Chief AI Officer or named an executive sponsor?

- What's our talent plan? Can we hire? Do we need external partners?

5. Data Governance and Managing AI Risk

Governance isn't bureaucracy—it's the framework that lets you move fast safely. Organisations without governance frameworks experience four recurring problems:

Regulatory exposure: Models trained on biased or unrepresentative data can fail disparate impact tests and attract regulatory action. The Financial Conduct Authority and UK Information Commissioner's Office are actively monitoring AI implementations in finance and data processing respectively.

Opacity and drift: Models deployed without monitoring degrade over time. Without clear documentation of model logic, training data, and retraining schedules, organisations lose the ability to explain decisions when things go wrong.

Proliferation and shadow AI: Without governance, teams shadow-deploy AI tools (informal ChatGPT instances, unsanctioned cloud models) that bypass security and compliance review.

Talent and skill gaps: Teams operating AI models without understanding their limitations and edge cases create false confidence in system reliability.

Your governance framework should address four areas:

Model Transparency

Document training data, features, decision thresholds, and known limitations. Models should be explainable to business stakeholders, not just data scientists.

Bias and Fairness

Test models for disparate impact across protected characteristics. Set tolerance thresholds. Document mitigation strategies. Regularly audit performance across demographic groups.
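One common way to test for disparate impact is to compare approval (or selection) rates across groups and check the ratio of the lowest rate to the highest against a tolerance threshold. The sketch below is illustrative only: the function names, the sample data, and the 0.8 tolerance are assumptions, and the right threshold is a decision for your own governance framework and legal advisers.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> {group: approval rate}."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Ratio of the lowest group approval rate to the highest (1.0 means parity)."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody approved in any group, so there is no disparity to measure
    return min(rates.values()) / highest


# Illustrative data only: group A approved 8 of 10, group B approved 5 of 10.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
TOLERANCE = 0.8  # assumed threshold, to be set in your governance framework

ratio = disparate_impact_ratio(sample)
print(f"ratio={ratio:.3f}, within tolerance: {ratio >= TOLERANCE}")
```

A ratio check like this is a starting point for a fairness audit, not a substitute for one: it says nothing about why rates differ or whether accuracy differs between groups.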

Data Quality and Provenance

Maintain clear lineage of data used to train models. Version control training datasets. Establish data quality standards. Monitor for data drift post-deployment.
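Monitoring for data drift usually means comparing the distribution of a feature in live data against the distribution seen at training time. One widely used measure is the Population Stability Index (PSI). The sketch below is a minimal, standard-library version under stated assumptions: the equal-width binning, the `eps` floor for empty bins, and the sample data are illustrative choices, and any alert threshold is yours to set.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a training sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all training values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the training range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor each proportion at eps so the logarithm is always defined.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Illustrative data: a training sample and a live sample shifted upwards.
training = [i / 100 for i in range(100)]
live_same = list(training)
live_shifted = [v + 0.5 for v in training]

print(f"no shift:  {psi(training, live_same):.3f}")
print(f"shifted:   {psi(training, live_shifted):.3f}")
```

Identical distributions give a PSI of zero, and the value grows as the live data moves away from the training data, which makes it a convenient number to track per feature on a dashboard.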

Audit and Accountability

Log model decisions, retraining events, and performance metrics. Establish clear roles: who approves new models? Who monitors performance? Who decides when to retire?
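An audit trail is easier to maintain when every decision, retraining event, and metric update is written in one structured format with a named owner. The sketch below shows one possible shape using JSON lines. The `AuditEvent` fields, the model name, and the role titles are hypothetical, and an in-memory stream stands in for whatever append-only store you actually use.

```python
import io
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    """Hypothetical audit record; field names are illustrative."""
    event_type: str     # e.g. "decision", "retraining", "performance_metric"
    model_id: str
    model_version: str
    detail: dict        # event-specific payload, e.g. outcome or metric values
    owner: str          # named role accountable for the model
    timestamp: str      # UTC, ISO 8601


def log_event(stream, event_type, model_id, model_version, detail, owner):
    """Append one event to a text stream as a JSON line and return it."""
    event = AuditEvent(
        event_type=event_type,
        model_id=model_id,
        model_version=model_version,
        detail=detail,
        owner=owner,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    stream.write(json.dumps(asdict(event)) + "\n")
    return event


# In practice the stream would be an append-only file or logging service.
audit_log = io.StringIO()
log_event(audit_log, "decision", "credit-scoring", "1.4.2",
          {"application_ref": "APP-001", "outcome": "declined"},
          "Head of Credit Risk")
log_event(audit_log, "retraining", "credit-scoring", "1.5.0",
          {"training_data_version": "2026-02"},
          "Head of Credit Risk")

print(audit_log.getvalue())
```

Recording the model version alongside each decision is what lets you reconstruct, after the fact, which model produced a contested outcome.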

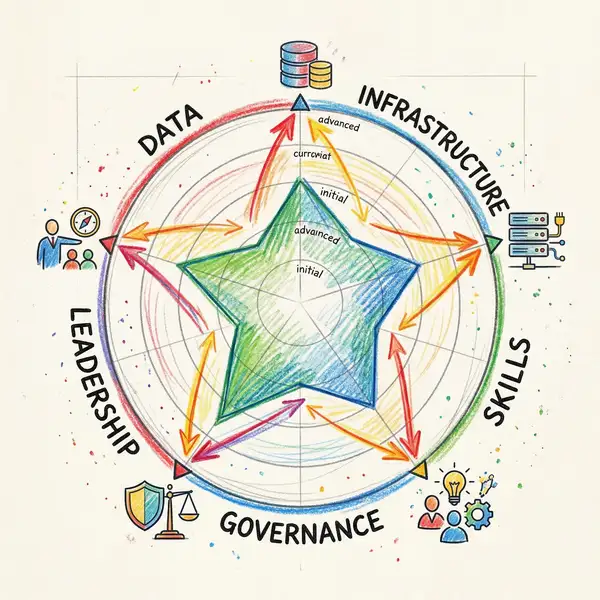

6. Assessing and Building Organisational Capability

AI success hinges on people, not tooling. Your organisation's readiness depends on four interconnected dimensions: data maturity, technical talent, business acumen, and change management capability.

Data maturity sits at the foundation. Without clean, labelled data at scale, sophisticated models are worthless. Many UK enterprises discover they're 18–24 months behind on data preparation when they begin AI initiatives. Your data assessment should map:

- Data accessibility: Can teams easily access needed data, or is it siloed across legacy systems?

- Data quality: Is data consistent, complete, and accurate enough for modelling?

- Data governance: Do you have policies on data ownership, retention, and lineage?

- Data volume: Do you have sufficient historical data to train models (typically 6–24 months)?
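Two of the assessment questions above, data quality and data volume, lend themselves to quick automated checks. The sketch below is a rough illustration, assuming records arrive as plain dictionaries. The field names and sample records are invented, and real assessments would also cover consistency and accuracy.

```python
from datetime import date


def completeness(records, required_fields):
    """Share of records with a non-empty value for each required field."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in required_fields}
    return {
        field: sum(1 for r in records if r.get(field) not in (None, "")) / total
        for field in required_fields
    }


def history_months(dates):
    """Whole calendar months between the earliest and latest record date."""
    first, last = min(dates), max(dates)
    return (last.year - first.year) * 12 + (last.month - first.month)


# Illustrative records only.
records = [
    {"id": 1, "amount": 120.0, "recorded": date(2024, 1, 15)},
    {"id": 2, "amount": None, "recorded": date(2024, 9, 3)},
    {"id": 3, "amount": 75.5, "recorded": date(2025, 3, 1)},
    {"id": 4, "amount": "", "recorded": date(2025, 3, 20)},
]

print(completeness(records, ["id", "amount"]))
print(history_months([r["recorded"] for r in records]), "months of history")
```

Running checks like these per source system gives the data audit a comparable score for each dataset instead of a general impression.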

Technical talent remains the most acute constraint. UK unemployment in AI roles sits below 1%. Top practitioners command £150K–£250K+ salaries. Your talent strategy must consider: hiring directly, partnering with consultancies, building centres of excellence, or a hybrid approach. Each path has different cost and timeline implications.

Business acumen is often overlooked. Your technical team must understand the business problems they're solving. Many failed AI projects stem from data scientists optimising for the wrong metrics. Establish deep collaboration between technical teams and business sponsors from day one. Rotate talent across business and tech functions.

7. Building Executive Alignment and Board Governance

Research by Protiviti and BoardProspects reveals a staggering gap: organisations reporting high AI ROI discuss AI strategy at board meetings consistently. Organisations with low ROI discuss it sporadically. Frequency of board-level discussion predicts ROI better than most technical indicators.

This is because governance forces clarity. When the CFO, CEO, and board chair are regularly reviewing AI ROI metrics, governance frameworks, and risk status, the entire organisation aligns. You can't hide a poorly scoped use case or a compliance gap when oversight is active.

Your board governance model should include:

Quarterly Board Reports

AI ROI dashboards showing financial outcomes, governance compliance, risk status, and capability progress. Treat this as seriously as financial reporting.

AI Steering Committee

Monthly or bi-weekly meetings with CEO, CFO, and business unit heads making strategic decisions on use case prioritisation and resource allocation.

Chief AI Officer Accountability

Clear role with executive accountability for strategy delivery, governance compliance, and capability building. This role must report to the CEO or CFO, not to the CIO or CTO.

Risk and Compliance Integration

AI governance owned jointly by business and compliance. AI risk should feature in enterprise risk reporting, with audit committee oversight.

8. Common Pitfalls and How to Avoid Them

Leading organisations share patterns of success. Struggling organisations share patterns of failure. Here are the most common pitfalls:

Pitfall 1: Technology-First Decisions

The mistake: Boards approve large investments in AI platforms or consultancies before defining use cases and business outcomes.

The reality: Technology without strategy is expensive noise. Reverse the sequence: define outcomes, then technology.

Pitfall 2: Underestimating Data Work

The mistake: Teams expect to deploy ML models within weeks when they haven't validated data quality or completeness.

The reality: 60–70% of AI project timelines are consumed by data preparation. Budget accordingly; plan for 6–12 months of data work before model training.

Pitfall 3: Treating AI as an IT Problem

The mistake: CIO owns the AI programme; business units lack executive sponsorship or accountability.

The reality: AI is a business transformation programme that requires technology. Business leaders must own outcomes; IT enables delivery.

Pitfall 4: Skipping Governance "To Move Fast"

The mistake: Teams deploy models without documentation, audit trails, or fairness testing because governance "slows us down."

The reality: Governance enables speed by catching failures early and preventing regulatory exposure. Governance frameworks don't slow deployment; they accelerate it by reducing rework.

To avoid these pitfalls, establish clear decision gates in your roadmap. Every use case should pass a governance and business case review before funding. Every deployment should include documented testing for bias, performance, and explainability.

Frequently Asked Questions

Should we build AI models in-house or buy off-the-shelf solutions?

Most organisations pursue a hybrid: buy pre-built tools (ChatGPT, Microsoft Copilot, industry-specific SaaS) for commodity use cases (document processing, customer support). Build custom models for proprietary, high-value differentiators (pricing models for financial services, manufacturing optimisation). In-house build requires significant talent investment; buying provides faster time to value but less customisation. Your choice depends on competitive differentiation potential and available talent budget.

How long does it typically take to deploy an AI use case from strategy to production?

Mature organisations report 12–18 months for end-to-end deployment of a new use case (strategy, POC, scale). Foundation work takes 4–6 months; pilot takes 4–6 months; production deployment and optimisation takes 4–6 months. Organisations report faster cycles on second and third use cases (10–12 months) because data foundations and governance are established. Budget conservatively; many factors extend timelines: data quality issues, regulatory review, talent constraints, or scope creep.

What's the typical budget for an enterprise AI programme (first two years)?

Budget £1–3 million for a sustained AI programme across a mid-market enterprise (1,000–5,000 employees). Allocate roughly: 40% to talent (hiring, consultancies), 30% to data infrastructure and tooling, 20% to change management and training, and 10% to governance and compliance. Larger enterprises (10,000+ employees) see this scale proportionally. ROI benchmarks: organisations report average payback periods of 18–24 months on well-scoped use cases in revenue growth or operational efficiency categories.
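The allocation above is simple arithmetic, and writing it down makes it easy to rerun for different totals. A minimal sketch follows: the percentage split comes from the paragraph above, while the £2 million total is only an illustrative midpoint of the quoted range and the category keys are naming assumptions.

```python
# Percentage split taken from the paragraph above.
ALLOCATION_PCT = {
    "talent": 40,
    "data_infrastructure_and_tooling": 30,
    "change_management_and_training": 20,
    "governance_and_compliance": 10,
}


def allocate(total_gbp: int) -> dict:
    """Split a total budget (whole pounds) across the four categories."""
    if sum(ALLOCATION_PCT.values()) != 100:
        raise ValueError("allocation percentages must sum to 100")
    return {area: total_gbp * pct // 100 for area, pct in ALLOCATION_PCT.items()}


budget = allocate(2_000_000)  # illustrative total, not a recommendation
for area, amount in budget.items():
    print(f"{area}: £{amount:,}")
```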

How do we address bias and fairness in our AI models?

Start with representation testing: does training data represent the populations your model will serve? Conduct disparate impact analysis: does the model have different accuracy or approval rates for different demographic groups? For high-stakes decisions (credit, hiring, healthcare), use bias auditing tools and establish threshold tolerance. Document all bias tests in your governance framework. Consider fairness trade-offs: some fairness approaches reduce overall accuracy; have conversations about acceptable trade-offs with business sponsors. Schedule regular fairness audits post-deployment—model fairness can degrade as underlying populations or data distributions shift.

What happens if an AI model makes a harmful or illegal decision?

This is exactly why governance frameworks exist. Your response plan should include: immediate model suspension, thorough audit of affected decisions, notification of affected parties (regulatory requirement in many cases), remediation of any wronged customers, and root cause analysis (biased training data? Model drift? Logic error?). Document everything for regulatory review. The UK Information Commissioner's Office and Financial Conduct Authority have published guidance on AI accountability. Having an incident response plan in place before problems occur can mean the difference between a managed remediation and a reputational catastrophe.

How do we measure AI ROI and ensure accountability?

Define financial KPIs before deployment: cost savings in hours/money, revenue uplift from new capabilities, improved customer retention, reduced defect rates. Establish a baseline before AI deployment; measure change after. Common metrics: cost per transaction processed, revenue per customer served, defect detection rate, customer satisfaction. Track leading indicators (model accuracy, system uptime, bias audit results) and lagging indicators (business outcomes, ROI, user adoption). Report on both monthly. Assign accountability to a named executive sponsor who owns the outcome. No accountability = no results.
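The baseline-then-measure approach described above reduces to a few small calculations. The sketch below assumes a simple model with constant monthly benefit and no discounting. The function names and all figures are hypothetical and are there only to show the mechanics.

```python
import math


def relative_change(baseline, after):
    """Fractional reduction from baseline (positive means the metric fell)."""
    return (baseline - after) / baseline


def simple_roi(total_benefit_gbp, total_cost_gbp):
    """Net benefit divided by cost, with no discounting."""
    return (total_benefit_gbp - total_cost_gbp) / total_cost_gbp


def payback_months(upfront_cost_gbp, monthly_net_benefit_gbp):
    """Months until cumulative benefit covers the upfront cost; None if never."""
    if monthly_net_benefit_gbp <= 0:
        return None
    return math.ceil(upfront_cost_gbp / monthly_net_benefit_gbp)


# Illustrative figures only.
cost_per_transaction_before = 5.00
cost_per_transaction_after = 3.50
reduction = relative_change(cost_per_transaction_before, cost_per_transaction_after)

print(f"cost per transaction reduced by {reduction:.0%}")
print(f"ROI: {simple_roi(1_800_000, 1_200_000):.0%}")
print(f"payback: {payback_months(1_200_000, 60_000)} months")
```

Keeping the baseline as an explicit input makes it harder to report an improvement that was never measured against a starting point.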

Ready to Build Your AI Strategy?

Your AI strategy is the foundation for competitive advantage in 2026. We help C-suite executives and boards develop and execute AI strategies that deliver measurable ROI, with robust governance and sustainable capability building.

Clwyd Probert

Managing Director, Whitehat SEO

Clwyd leads strategy and delivery for enterprise AI programmes across financial services, retail, and manufacturing. With 15 years in digital transformation and 8 years specialising in AI and ML governance, he advises boards on AI risk, capability building, and ROI measurement.


Sources: UK Government AI and Quantum Investment 2026, Protiviti Board Oversight of AI Research 2026, McKinsey State of AI 2026, UK Information Commissioner's Office AI Guidance, Financial Conduct Authority AI Framework